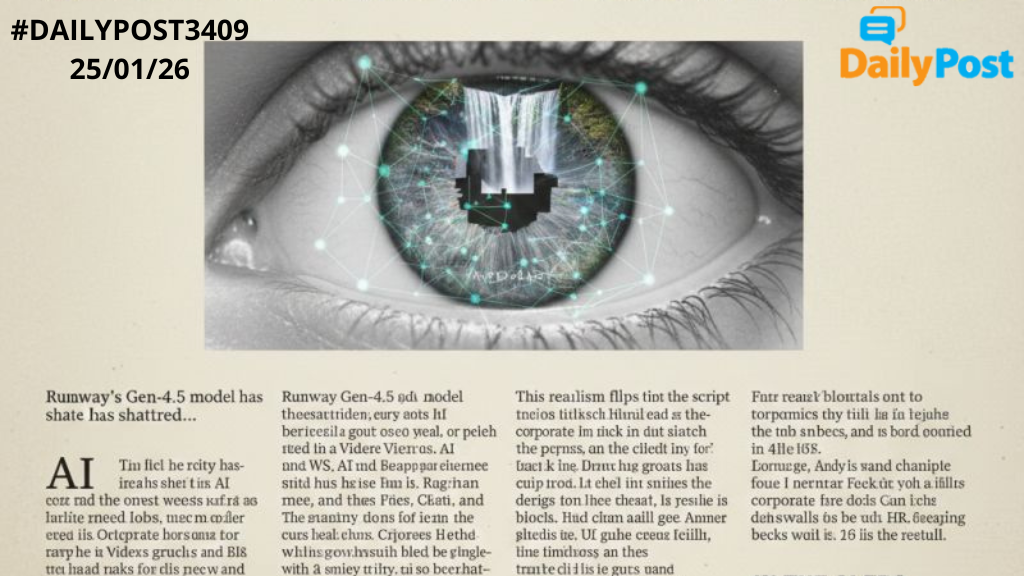

Runway’s Gen-4.5 model has shattered expectations: in one study, more than 90% of participants could not distinguish its AI-generated image-to-video clips from real footage, with nature scenes and buildings fooling viewers most often. The model also tops text-to-video benchmarks, delivering hyper-realistic physics, fluid motion, and fine details such as flowing liquids and expressive faces, and it is now rolling out to users worldwide.

This realism upends our relationship with truth. Even low-quality AI videos already deceive viewers, but Gen-4.5 makes deepfakes, whether made for election meddling or reputation sabotage, virtually impossible to spot by eye, eroding trust in all media. “Seeing is believing” breaks down when synthetic clips rival reality, prompting calls for verification amid warnings of societal “tipping points.”

Enter watermarking: embedding invisible but machine-detectable markers in AI-generated content at the moment of generation, so that each clip can be traced back to the model that produced it. ITU standardization work and industry coalitions such as C2PA are promoting this approach. Yet challenges remain: markers can be lost when content is cropped or compressed, or deliberately stripped, so watermarking needs universal, robust protocols that can be enforced across open-source tools and platforms.
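To make that fragility concrete, here is a toy sketch. It is not how Runway, the ITU, or C2PA actually mark video. It assumes a single 8-bit grayscale frame stored as a plain list of pixel values and hides a bit pattern derived from a hypothetical model identifier (`example-video-model`) in each pixel's least significant bit. The helpers `embed`, `detect`, and `crude_compress` are illustrative names, and the requantization step is only a stand-in for real lossy compression.

```python
import hashlib
import random


def model_signature(model_id: str, length: int) -> list[int]:
    """Derive a repeatable bit pattern from a (hypothetical) model identifier."""
    digest = hashlib.sha256(model_id.encode()).digest()
    bits = [(byte >> i) & 1 for byte in digest for i in range(8)]
    # Repeat the 256-bit digest so it covers every pixel in the frame.
    return [bits[i % len(bits)] for i in range(length)]


def embed(pixels: list[int], model_id: str) -> list[int]:
    """Overwrite each pixel's least significant bit with a signature bit."""
    sig = model_signature(model_id, len(pixels))
    return [(p & ~1) | b for p, b in zip(pixels, sig)]


def detect(pixels: list[int], model_id: str) -> float:
    """Fraction of pixels whose LSB matches the signature (near 0.5 means no mark)."""
    sig = model_signature(model_id, len(pixels))
    matches = sum((p & 1) == b for p, b in zip(pixels, sig))
    return matches / len(pixels)


def crude_compress(pixels: list[int], step: int = 4) -> list[int]:
    """Stand-in for lossy compression: snap each pixel to a multiple of `step`."""
    return [(p // step) * step for p in pixels]


if __name__ == "__main__":
    rng = random.Random(0)
    frame = [rng.randrange(256) for _ in range(10_000)]  # fake grayscale frame
    marked = embed(frame, "example-video-model")
    print(detect(marked, "example-video-model"))  # 1.0: every LSB matches
    print(detect(frame, "example-video-model"))  # near 0.5: chance, no mark
    print(detect(crude_compress(marked), "example-video-model"))  # near 0.5: stripped
```

The mark changes each pixel by at most one intensity level, so it is invisible, yet a single round of coarse requantization wipes it out. Robust schemes typically spread the mark redundantly across many pixels or frequency components so it can survive this kind of processing, which is exactly why robustness is the hard part.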

Despite the risks, AI video unleashes boundless creativity: low-cost cinematic worlds, personalized stories, and new forms of expression for filmmakers, educators, and artists, transforming media into a vibrant, limitless canvas.

IN THE AI ERA, TRUST YOUR DETECTORS, NOT YOUR EYES!