DailyPost 779

WEB SCRAPING

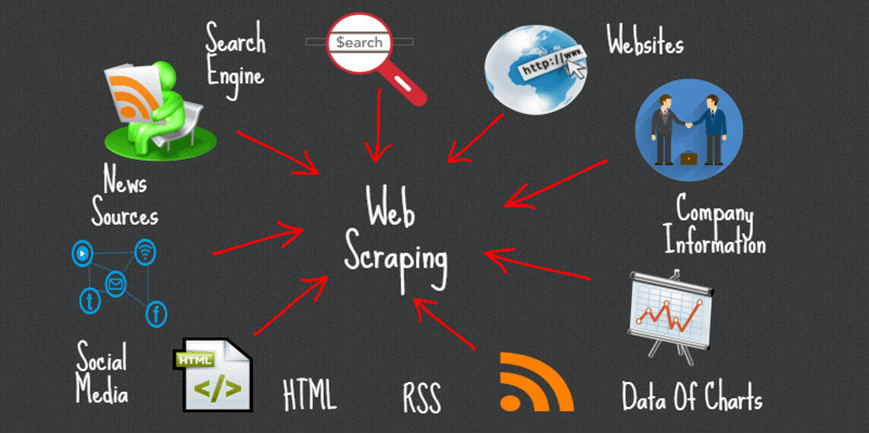

It is ironic that although all the information and data we need is available on the World Wide Web, we are still unable to find what best suits our needs. The quality of Google search, along with many near-manual methods, leaves a lot to be desired. Multimedia is another challenge. The recently launched Diffbot is touted to be 500 times the size of Google and works on the concept of a Knowledge Graph: a structured, methodical way of handling information and data on the web. “Web scraping or web data extraction is data scraping used for extracting data from websites.”

Manual web scraping is possible, but only at the expense of enormous time and effort; in tech parlance the term refers to automated processes implemented using a bot or a web crawler. A central local database or spreadsheet is created out of data available on the web, which can later be used for analysis or retrieval. Web scraping software automatically loads and extracts data as per your requirements. It can be custom built for a specific website or can be generic in nature. Generic software has been difficult to set up and use, and there seems to be a steep learning curve involved.
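To make the "extract into a spreadsheet" idea concrete, here is a minimal sketch using only Python's standard library. The sample page, the `TableScraper` class and the helper names are all hypothetical and chosen for illustration; a real scraper would download the HTML rather than read it from a string.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical sample page; a real scraper would download this HTML instead.
SAMPLE_HTML = """
<html><body>
<table>
  <tr><th>Product</th><th>Price</th></tr>
  <tr><td>Widget</td><td>10</td></tr>
  <tr><td>Gadget</td><td>25</td></tr>
</table>
</body></html>
"""


class TableScraper(HTMLParser):
    """Collects the text of every table cell, grouped by row."""

    def __init__(self):
        super().__init__()
        self.rows = []          # completed rows
        self._row = None        # row currently being read, if any
        self._in_cell = False   # True while inside a <td> or <th>

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._in_cell = True
            self._row.append("")

    def handle_endtag(self, tag):
        if tag in ("td", "th"):
            self._in_cell = False
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None
            self._in_cell = False

    def handle_data(self, data):
        # Only keep text that appears inside a table cell.
        if self._in_cell and self._row:
            self._row[-1] += data.strip()


def scrape_table(html):
    """Return the table cells in the given HTML as a list of rows."""
    parser = TableScraper()
    parser.feed(html)
    parser.close()
    return parser.rows


def rows_to_csv(rows):
    """Serialise rows as CSV text that any spreadsheet can open."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(rows)
    return buffer.getvalue()


rows = scrape_table(SAMPLE_HTML)
print(rows_to_csv(rows))
```

The CSV text can be saved to a file and opened in a spreadsheet for later analysis. A scraper written this way is tied to one page layout, which is the "custom built" case described above.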

The genesis of this tool is nearly as old as the web itself. While the World Wide Web was born in 1989, the World Wide Web Wanderer was created in June 1993 to measure its size. In December 1993, the first crawler-based web search engine came into existence. In 2000, the first API crawler arrived, and in 2005 visual web scraping software came to the market. In technical terms, a web scraper acts as an application programming interface (API) for extracting data from a website. Amazon AWS, Google and some others provide web scraping tools and services free of cost to their users. Some websites use methods to block web scraping by detecting bots and disallowing them from crawling their pages.
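One common, voluntary way for a website to tell bots which pages they should not crawl is a `robots.txt` file; it is only one of several methods and does not by itself detect bots. The sketch below uses Python's standard `urllib.robotparser` to check a URL against such rules. The rules, the bot name `ExampleBot` and the `example.com` URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would fetch it from the site.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if the given rules let this user agent fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


public_ok = is_allowed(SAMPLE_ROBOTS_TXT, "ExampleBot",
                       "https://example.com/public/page.html")
private_ok = is_allowed(SAMPLE_ROBOTS_TXT, "ExampleBot",
                        "https://example.com/private/data.html")
print(public_ok, private_ok)
```

A well-behaved scraper would run a check like this before requesting each page and skip anything the site has disallowed.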

Web scraping extracts immense value from web data with the least amount of effort, to suit any requirement of the individual user or the enterprise. The ingenuity of users and enterprises in getting the data they require and using it in the most suitable manner is the crux of making the best of this tool. DOM parsing, computer vision and natural language processing capabilities are also used for this purpose.
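As a rough illustration of DOM parsing, the sketch below builds a document tree with Python's standard `xml.dom.minidom` and picks out elements by tag and class. It assumes the page is well-formed XHTML, which many real pages are not; the sample markup and the `texts_by_class` helper are hypothetical.

```python
from xml.dom import minidom

# Hypothetical, well-formed sample page used only for illustration.
SAMPLE_PAGE = """<html><body>
<h1>Daily deals</h1>
<ul>
  <li class="deal">Widget</li>
  <li class="note">Ends soon</li>
  <li class="deal">Gadget</li>
</ul>
</body></html>"""


def texts_by_class(markup, tag, class_name):
    """Return the text of every <tag> element whose class equals class_name."""
    dom = minidom.parseString(markup)
    results = []
    for node in dom.getElementsByTagName(tag):
        if node.getAttribute("class") == class_name:
            # Join the element's direct text children into one string.
            text = "".join(
                child.data
                for child in node.childNodes
                if child.nodeType == child.TEXT_NODE
            )
            results.append(text.strip())
    return results


deals = texts_by_class(SAMPLE_PAGE, "li", "deal")
print(deals)
```

Working on the tree rather than on raw text lets the scraper ask for "every list item marked as a deal" instead of searching for patterns in the page source.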

WEB SCRAPING CAN IMMENSELY ENHANCE THE UTILITY OF THE WORLD WIDE WEB FOR YOU.

Sanjay Sahay